Published 23:58 16 Jun 2022 BST

Updated 11:18 17 Jun 2022 BST

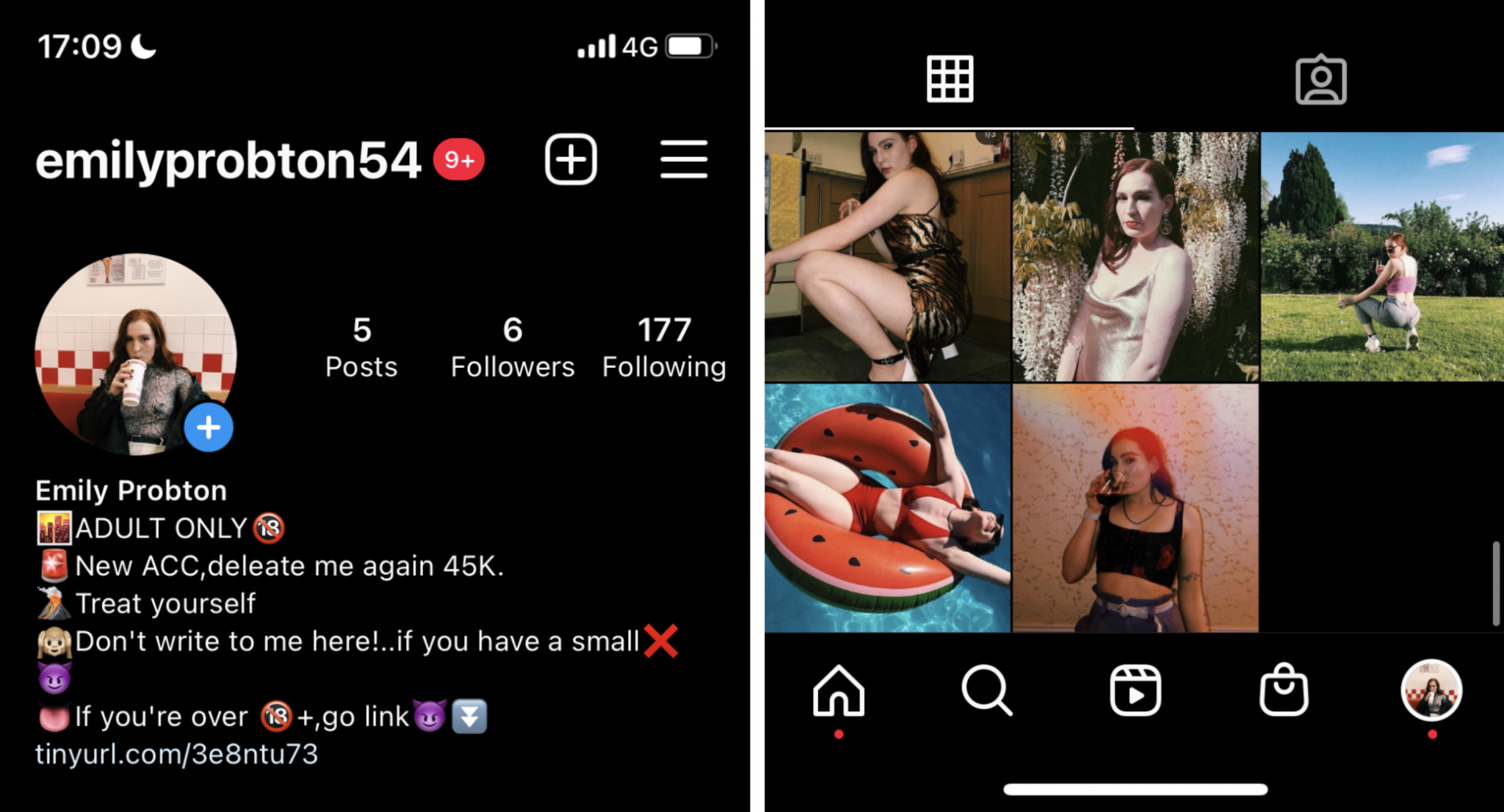

Firstly, to become a pornbot, you need to think like a pornbot. I went back on my personal Instagram and gathered a few pictures from when I was younger and less self-conscious, including a bikini pic and one where I’m suggestively drinking a glass of wine - Insta fodder which really screams “click on my dodgy link”.

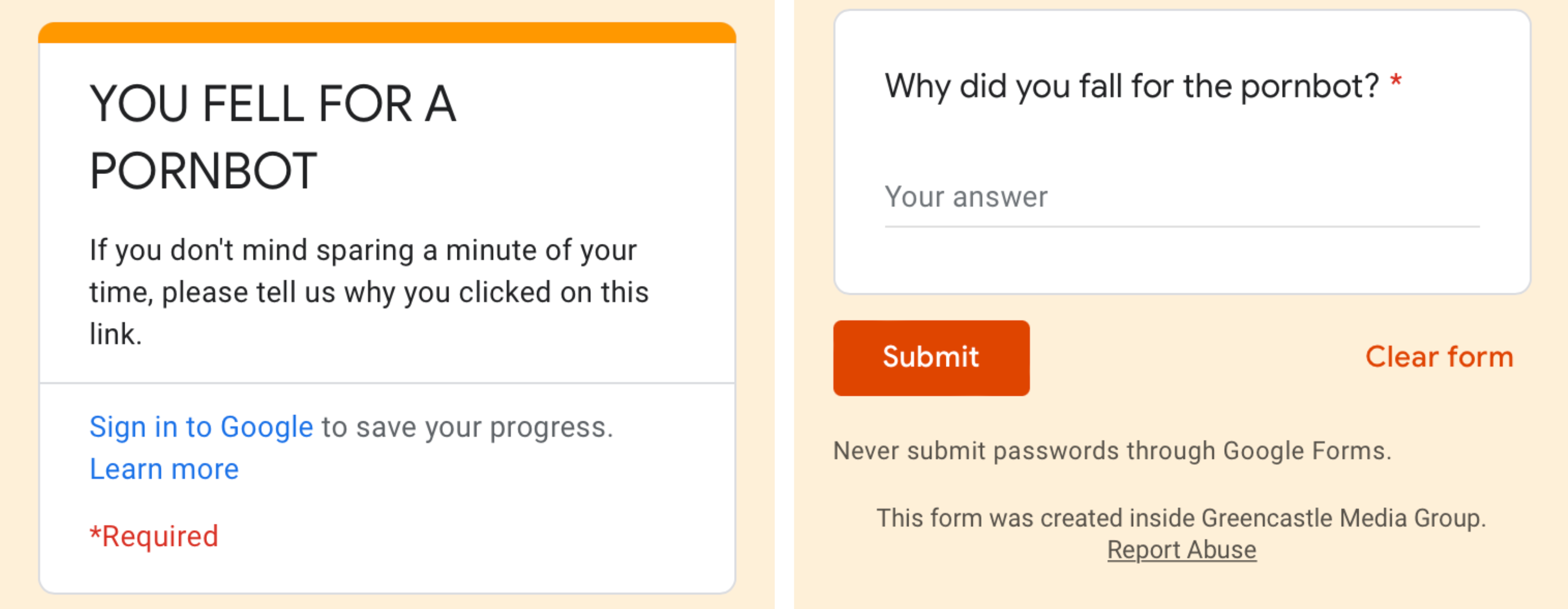

Pornbots’ handles are typically formatted as ‘first name, last name, random number’ and so I became @emilyprobton52 (a bog-standard female first name, and a last name which also happens to be an anagram of pornbot). I copied another pornbot’s bio and claimed my real account had been “deleted at 45K” - they always do, to look legit. Finally, I adorned my new profile with a dubious-looking link in the bio, except the link actually diverts people to a Google form asking them why they fell for such an obvious pornbot.

Then I got to work being a pornbot. To be a successful pornbot, you need to specialise. Ever wonder what the Instagram accounts of Premier League football teams, online news sites, Formula One drivers and Kylie Jenner all have in common? Male followers. Oh, and pornbots. Thomas Platt, head of e-commerce at the bot management firm Netacea, which protects companies such as Sony, William Hill and All Saints from automated bot attacks, says this is no coincidence. “I mean if you’re looking for males, middle-aged and single…” he laughs. “It’s just like any form of advertising, the more targeted they can get the better the clicks. It would be naive to assume they’re not optimising who they’re targeting.”

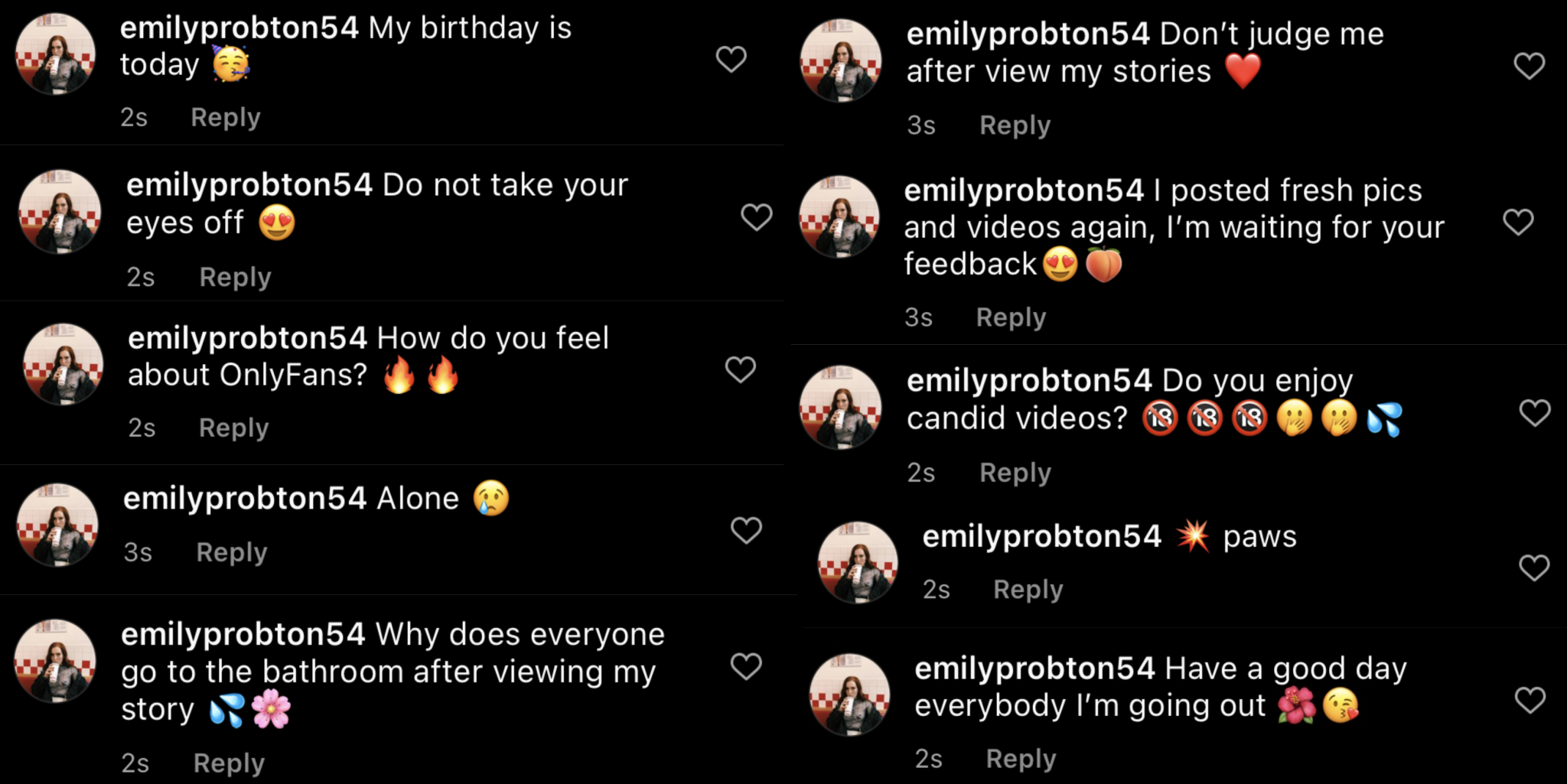

So I followed a bunch of people in the “followers” list of accounts like Manchester United, Manchester City, Chelsea, Formula One and the UFC. Then I added a bunch of horny, semi-literate comments on their recent posts - all stuff that I had noticed and documented other pornbots doing. I went from the basic (“I want to feel your arms around me”) to the overt (“I want to touch your banana”) to the straight-up inane (just the word “paws”... yeah they really use that). I commented tens of times on multiple pages, spreading my seed, laying the bait.

One of the people tasked with deleting suggestive comments like mine is Joel Bailey, co-founder of Arwen, an AI tool that tackles spam comments on social media, including pornbots. Arwen’s clients include Manchester City football club, a Formula One team and British comedian Rosie Jones, who’s known for her panel appearances on comedy shows like The Last Leg and Would I Lie To You. The company was initially founded to tackle online hate after the slew of racist abuse that followed last year’s Euros, when three England players failed to convert penalties. “Then our clients kept saying ‘We get all this spam - can you help us with that as well?’” Joel recalls.

Joel is what I like to call a pornbot hunter. With the help of his team at Arwen, he has formulated a system that catches pornbot comments like mine before Instagram users even see them. “Our USP is sub-second removal, so barely anyone sees it,” Joel says. “You stop all the risk of a [pornbot] pile-on by getting it early, and also the risk of people clicking on something that they shouldn’t.”

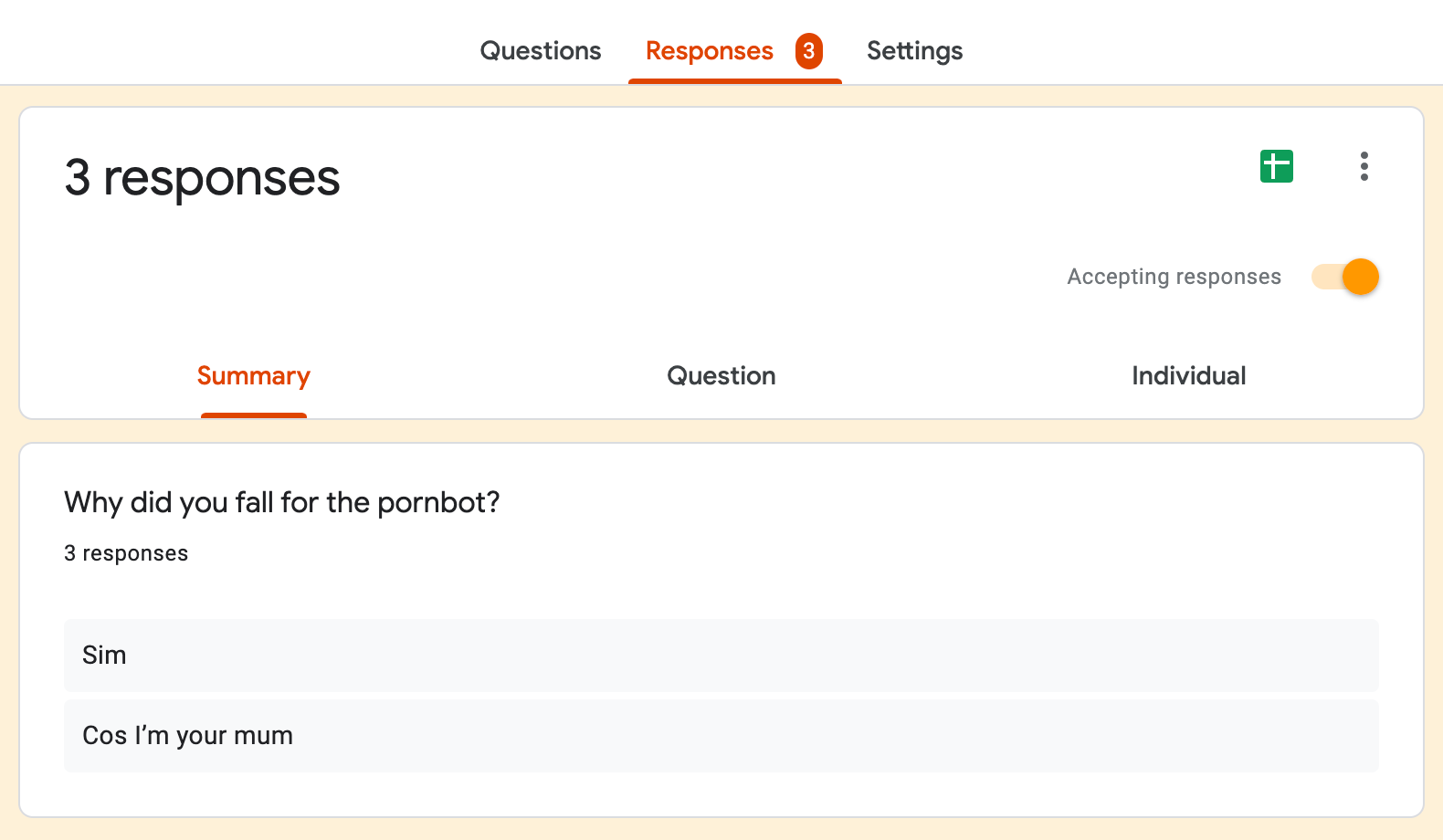

The issue with clicking on these links is that they don’t lead to a fun little investigative Google form like mine (the only responses I got were “sim” (???) and “cos I’m your mum”). They’re more nefarious than that. Most likely, Platt says, they’re for making money. “Bots tend to do three things,” he explains: “Spread misinformation, genuine hacking, or ad fraud and click fraud.” He reckons pornbots most likely fall into the last category: the people who create them make money off dodgy links, in the same way an online advert makes someone money every time you click on it. There’s probably one on this page right now.

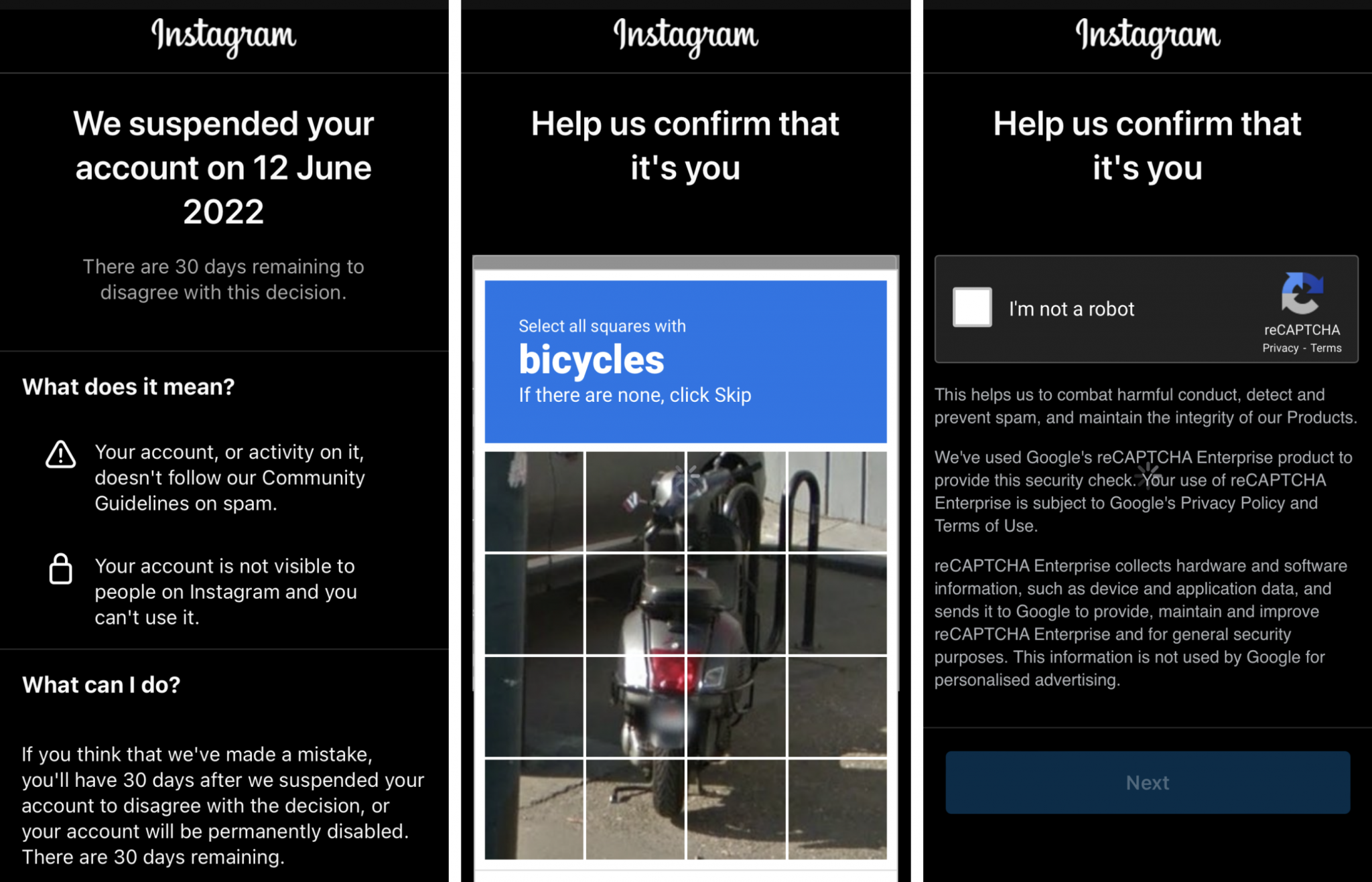

The bots you see tend to follow only around 150 accounts rather than two million. More follows would benefit a bot, but following en masse is likely what gets them sniffed out by Instagram. And since I was pretending to be a bot, I couldn’t answer Instagram’s captcha questions. What I could do, though, was make another account straight away called @AliceProboton31 and do the exact same thing over again, just like a bot would. Delete one and another pops right back up, like whack-a-mole.

“It’s a cat and mouse game,” says Bailey. For him, this game isn’t just an irritation - it’s become his livelihood. But companies like Arwen, of which there are very few (“We’re one of two or three in the UK, and probably one of six or seven globally,” says Joel), can’t automatically remove every pornbot on the platform. So there are thousands - potentially millions - still knocking about. And they’re more than just a nuisance to be removed for the sake of a smooth-functioning Instagram experience. In some cases, they’re actually harming real people.

Fortunately, I had a load of my own pictures I could use when pretending to be Emily and Alice Proboton. But had I been a real bot, lacking in skin, hair and, well, boobs, I’d have had to resort to stealing. This is the more insidious side of pornbots: they catfish as real people, stealing from their Instagrams - or more private places.

[caption id="attachment_342255" align="alignnone" width="1177"] Alecia is an OnlyFans creator who has had her pictures stolen by pornbots at least ten times[/caption]

“It’s happened to me at least ten times,” says Alecia, a 21-year-old OnlyFans creator living in Lincoln. “I once had an account posting pictures of a leak from my OnlyFans. And I was like… they’re literally getting away with posting porn, but if I post a picture of my cleavage they [Instagram moderators] are all over that? And they get a lot of views as well, one of the videos [the bot posted] had like 10,000 views.”

Instagram insists they don’t benefit from this type of activity and are actively trying to stop it. They have over 15,000 dedicated “content reviewers” as part of an even wider team, and use proactive strategies and anti-spam filters to detect this type of stolen content. A Meta spokesperson told JOE: “We want the content you see on Instagram to be authentic, and we block millions of fake and spam accounts every day. We continue to invest in anti-spam technology, and in our safety and security team of over 40,000 people, who are focused on keeping spam and other types of harmful content off our platforms.”

But at the time of writing Emily and Alice Proboton are still very much up and running. Hit them up if you want, or report them - who cares anyway, I can just make another one.
